Predicting Image Quality and Aesthetics from EEG

Authors

Yelyzaveta Razumovska

Queen’s University Belfast

Victoria Porter

Queen’s University Belfast

Project Description

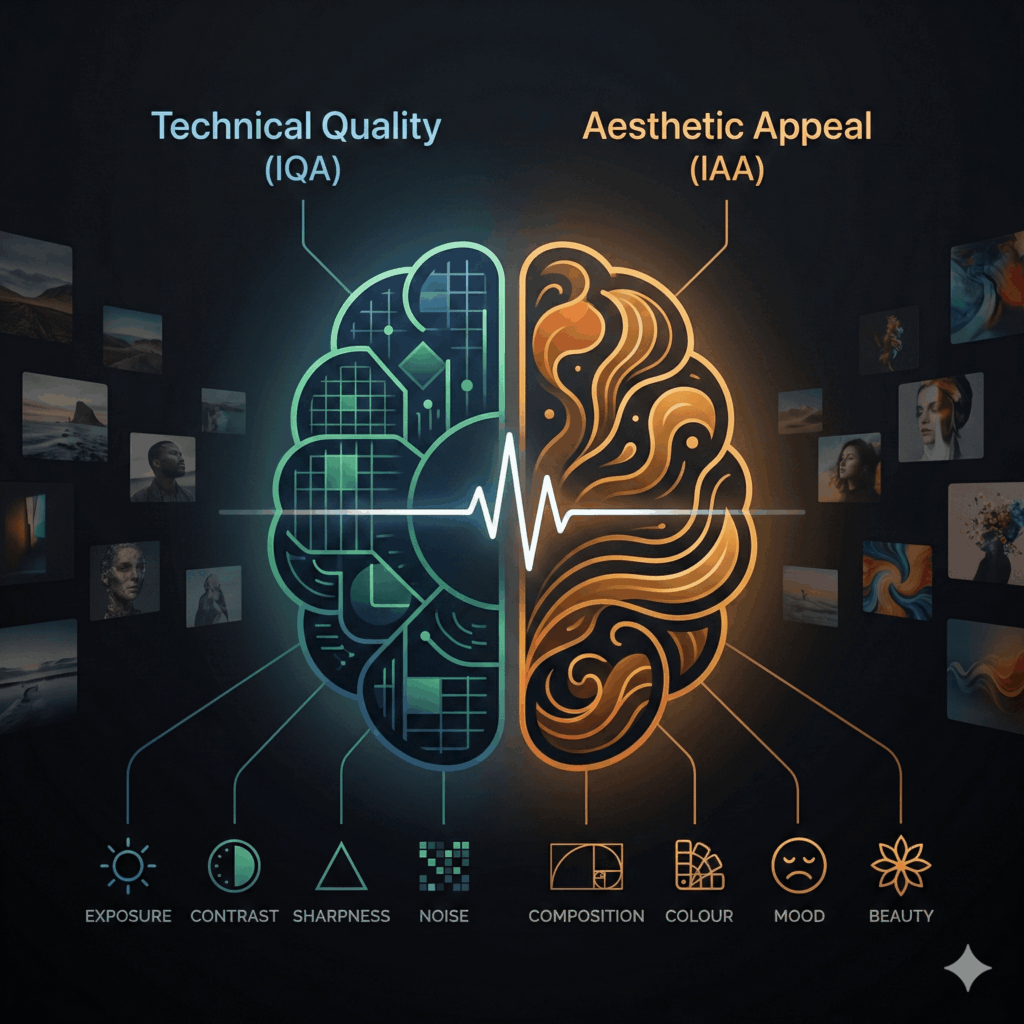

When we look at a photograph, our brain simultaneously processes its technical quality (exposure, contrast, sharpness, noise) and its aesthetic appeal (composition, mood, beauty). Image quality assessment (IQA) and image aesthetic assessment (IAA) are the computational counterparts of these two judgements. This project will investigate whether neural responses to images encode both of these perceptual dimensions and whether the two share common neural mechanisms. Previous work suggests that IQA and IAA rely on overlapping perceptual features but differ in their level of abstraction and cognitive processing: technical quality is more closely tied to low-level visual factors, and aesthetics to higher-level interpretation.
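To make "low-level visual factors" concrete, here is a minimal sketch of two simple proxies for technical quality: RMS contrast and the variance of a discrete Laplacian as a sharpness measure. These are illustrative stand-ins, not the IQA metrics the project will use, and the helper names (`rms_contrast`, `laplacian_variance`, `box_blur`) and the synthetic image are assumptions made for this example.

```python
import numpy as np


def rms_contrast(gray):
    """RMS contrast: standard deviation of pixel intensities (image in [0, 1])."""
    return float(np.std(gray))


def laplacian_variance(gray):
    """Sharpness proxy: variance of a 4-neighbour discrete Laplacian."""
    lap = (
        gray[:-2, 1:-1] + gray[2:, 1:-1]
        + gray[1:-1, :-2] + gray[1:-1, 2:]
        - 4.0 * gray[1:-1, 1:-1]
    )
    return float(np.var(lap))


def box_blur(gray, k=5):
    """Degrade an image by averaging k x k neighbourhoods (edge-padded)."""
    pad = k // 2
    padded = np.pad(gray, pad, mode="edge")
    out = np.zeros_like(gray)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + gray.shape[0], dx:dx + gray.shape[1]]
    return out / (k * k)


# Synthetic stand-in for a grayscale stimulus; real stimuli would be loaded from disk.
rng = np.random.default_rng(0)
sharp = rng.random((64, 64))
blurred = box_blur(sharp)

print("sharpness:", laplacian_variance(sharp), laplacian_variance(blurred))
print("contrast: ", rms_contrast(sharp), rms_contrast(blurred))
```

Both values drop for the blurred copy, which is the behaviour expected of a technical-quality measure. Aesthetic appeal has no comparably simple formula, which is one reason the two dimensions are treated separately.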

Questions we aim to answer:

- Do EEG responses correlate with image quality and aesthetic scores?

- Can we build models that predict IQA or IAA from EEG features?

- Do images with low IQA and IAA scores evoke convergent neural signatures in early EEG components (P1, N1) but divergent signatures in later components (P300, LPP), reflecting shared perceptual mechanisms but different higher-order evaluation? (advanced)
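One way to approach the first and third questions is to average the EEG amplitude in an early and a late time window on each trial and rank-correlate those values with the image scores. The sketch below does this on simulated single-channel data. The window limits (80 to 130 ms and 400 to 700 ms), the simulated effects, and the helper names are assumptions chosen for illustration, not properties of the hackathon dataset.

```python
import numpy as np


def window_mean(epochs, times, tmin, tmax):
    """Mean amplitude per trial within [tmin, tmax] seconds."""
    mask = (times >= tmin) & (times <= tmax)
    return epochs[:, mask].mean(axis=1)


def spearman(x, y):
    """Spearman correlation via ranks (assumes no ties, true for continuous data)."""
    rx = np.argsort(np.argsort(x)).astype(float)
    ry = np.argsort(np.argsort(y)).astype(float)
    return float(np.corrcoef(rx, ry)[0, 1])


rng = np.random.default_rng(42)
n_trials, sfreq = 300, 250.0
times = np.arange(-0.1, 0.8, 1.0 / sfreq)   # seconds relative to image onset

# Hypothetical per-image scores in [0, 1].
quality = rng.random(n_trials)
aesthetic = rng.random(n_trials)

# Simulated epochs (trials x time): noise plus an early effect driven by
# quality and a late effect driven by aesthetics. This is an assumption
# built into the toy data, not an empirical finding.
epochs = rng.normal(0.0, 1.0, (n_trials, times.size))
early = (times >= 0.08) & (times <= 0.13)
late = (times >= 0.40) & (times <= 0.70)
epochs[:, early] += 2.0 * quality[:, None]
epochs[:, late] += 2.0 * aesthetic[:, None]

early_amp = window_mean(epochs, times, 0.08, 0.13)
late_amp = window_mean(epochs, times, 0.40, 0.70)

r_early_quality = spearman(early_amp, quality)
r_early_aesthetic = spearman(early_amp, aesthetic)
r_late_quality = spearman(late_amp, quality)
r_late_aesthetic = spearman(late_amp, aesthetic)

print("early window: quality", r_early_quality, "aesthetic", r_early_aesthetic)
print("late window:  quality", r_late_quality, "aesthetic", r_late_aesthetic)
```

With real recordings, the epochs array would come from an EEG toolbox such as MNE-Python, and the same comparison would be repeated per channel or region of interest, with correction for multiple comparisons.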

During the hackathon, we will calculate image quality and aesthetic metrics for the visual stimuli used in the EEG dataset. We will then explore how these scores relate to EEG features by analysing correlations, building exploratory predictive models, and visualising the neural signatures associated with different levels of technical quality and aesthetic appeal. A secondary objective, which depends on the outcome of these analyses, is an exploratory test of the “early vs late processing” hypothesis: whether technical quality aligns more strongly with early sensory components and aesthetics with later cognitive components.
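An exploratory predictive model of the kind described above could start as a cross-validated ridge regression from EEG features to an image score. The following is a minimal NumPy sketch on simulated data. The feature matrix, the regularisation strength `alpha`, the fold count, and the function names are all assumptions for illustration, and a library such as scikit-learn would be a natural substitute in practice.

```python
import numpy as np


def ridge_fit(X, y, alpha):
    """Closed-form ridge weights; the intercept is left unpenalised by centring."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ yc)
    return w, y_mean - x_mean @ w


def cv_r2(X, y, alpha=1.0, n_folds=5, seed=0):
    """Out-of-fold R^2 from k-fold cross-validation."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    preds = np.empty_like(y)
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        w, b = ridge_fit(X[train_idx], y[train_idx], alpha)
        preds[test_idx] = X[test_idx] @ w + b
    ss_res = np.sum((y - preds) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


# Simulated data: 200 trials x 20 EEG features (e.g. window amplitudes per
# channel), with a score that depends linearly on the features plus noise.
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 20))
w_true = rng.normal(size=20)
y = X @ w_true + rng.normal(size=200)

r2_real = cv_r2(X, y)
# Control: shuffling the scores breaks the feature-score link.
r2_shuffled = cv_r2(X, rng.permutation(y))

print("cross-validated R^2:", r2_real)
print("shuffled-label R^2: ", r2_shuffled)
```

The shuffled-label control gives a chance-level baseline, which matters for EEG data where effect sizes tend to be small and overfitting is easy.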

Project requirements

- BSc (or final-year undergraduate)

- Conversational English

- Python programming

- Interest in EEG analysis

- MNE-Python or any other EEG analysis toolbox (optional)

- Familiarity with machine learning or deep learning (optional)

Programming languages used in this project

Python

Who are we looking for?

This project is highly multidisciplinary and would benefit from neuroscientists, cognitive scientists, and psychologists who can help interpret EEG components and guide experimental reasoning. Visual artists could also contribute a human-centred perspective on perceptual quality and aesthetics.

What can you gain from participating?

Participants will gain hands-on experience with a large open EEG dataset and learn practical analysis workflows in MNE-Python, including preprocessing, ERP extraction, and basic visualisation. They will also learn how to apply existing image quality and image aesthetics metrics and combine the resulting scores with EEG data. The project will show how statistical analysis and predictive models can link brain responses to perceptual properties of images. Overall, it will help participants understand how perceptual concepts such as “quality” and “beauty” can be defined, measured, and studied using brain data.

Key resources

- MNE-Python (https://mne.tools/stable/auto_tutorials/index.html)

- Python-EEG-Handbook paper (https://onlinelibrary.wiley.com/doi/full/10.1002/brx2.64) and repo (https://github.com/ZitongLu1996/Python-EEG-Handbook)

- Götz-Hahn, Wong & Hosu (2023). The Inter-relationship between Photographic Aesthetics and Technical Quality. (https://www.researchgate.net/publication/379379459_The_Inter-Relationship_Between_Photographic_Aesthetics_and_Technical_Quality)